Anna Rosenberg, John Kennedy, Zohar Keidar, Yehoshua Y. Zeevi and Guy Gilboa, Philosophical Transactions of the Royal Society A, 2025.

Abstract

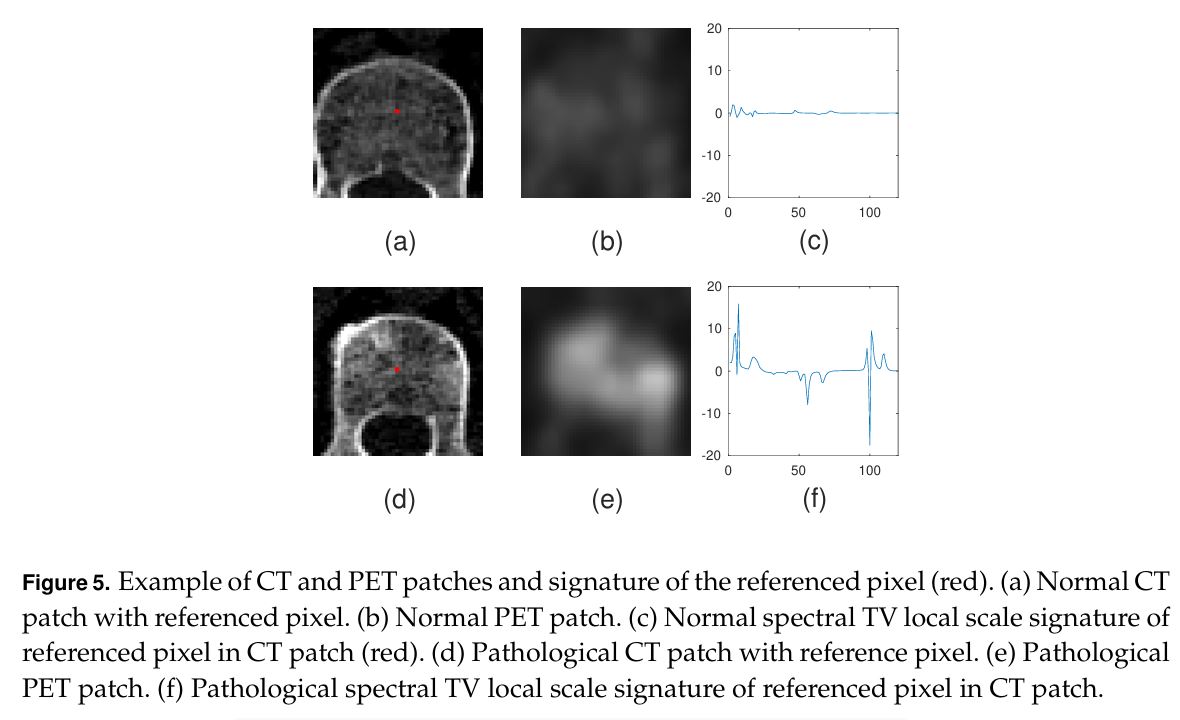

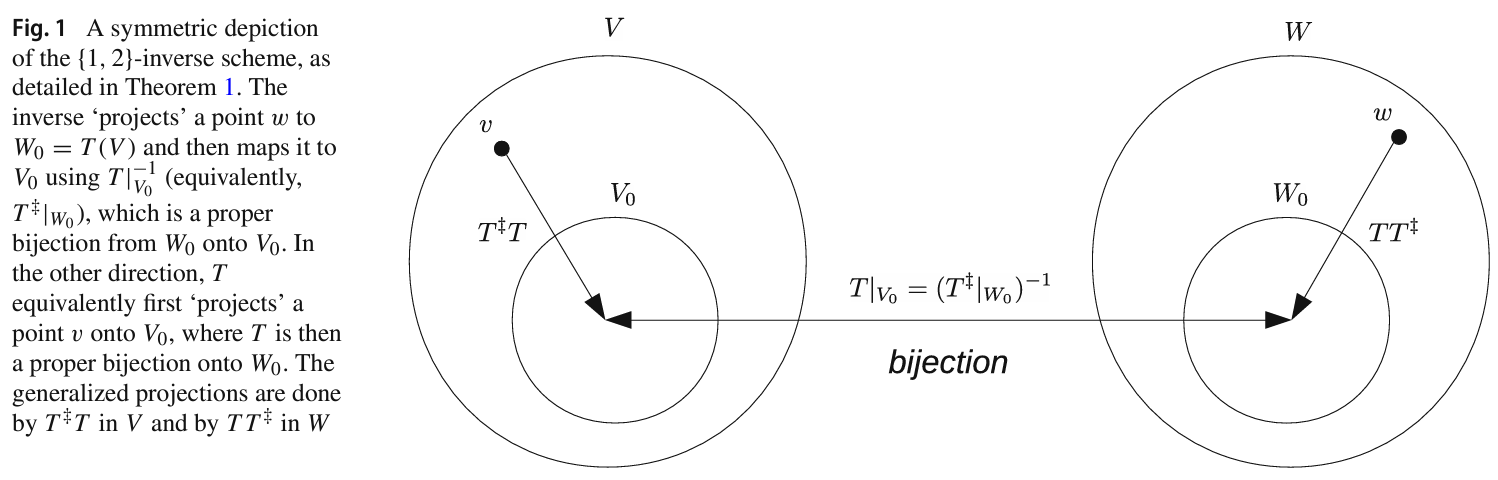

When solving computer vision problems with machine learning, one often encounters a lack of sufficient training data. To mitigate this, we propose the use of ensembles of weak learners based on spectral total-variation (STV) features (Gilboa G. 2014 A total variation spectral framework for scale and texture analysis. SIAM J. Imaging Sci. 7, 1937–1961. (doi:10.1137/130930704)). The features are related to nonlinear eigenfunctions of the total-variation subgradient and characterize textures well at various scales. It was shown (Burger M, Gilboa G, Moeller M, Eckardt L, Cremers D. 2016 Spectral decompositions using one-homogeneous functionals. SIAM J. Imaging Sci. 9, 1374–1408. (doi:10.1137/15m1054687)) that, in the one-dimensional case, orthogonal features are generated, whereas in two dimensions the features are empirically found to be weakly correlated. Ensemble learning theory advocates the use of weak learners with low mutual correlation. We therefore propose to design ensembles using learners based on STV features. To show the effectiveness of this paradigm, we examine a hard real-world medical imaging problem: the predictive value of computed tomography (CT) data for high uptake in positron emission tomography (PET) for patients suspected of skeletal metastases. The database consists of 457 scans with 1524 unique pairs of registered CT and PET slices. Our approach is compared with deep-learning methods and with radiomics features; the STV learners perform best (AUC = 0.87), compared with neural nets (AUC = 0.75) and radiomics (AUC = 0.79). We observe that fine STV scales in CT images are especially indicative of the presence of high uptake in PET.
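The preference for weakly correlated learners can be illustrated with a standard textbook identity, which is not taken from the paper itself. Assuming M learners whose outputs each have variance sigma^2 and a common pairwise correlation rho, the variance of their equally weighted average is rho * sigma^2 + (1 - rho) * sigma^2 / M. The minimal sketch below evaluates this expression; the function name `ensemble_variance` and the chosen values of rho are hypothetical and serve only as an illustration.

```python
def ensemble_variance(sigma2: float, rho: float, n_learners: int) -> float:
    """Variance of the equally weighted mean of n_learners outputs.

    Assumes (for illustration only) that every learner has the same
    variance sigma2 and that every pair has the same correlation rho.
    """
    if n_learners < 1:
        raise ValueError("n_learners must be at least 1")
    # The first term is the floor set by the shared correlation;
    # only the second term shrinks as learners are added.
    return rho * sigma2 + (1.0 - rho) * sigma2 / n_learners


if __name__ == "__main__":
    # Illustrative values only, not results from the paper.
    for rho in (0.0, 0.3, 0.9):
        print(f"rho={rho:.1f}  variance={ensemble_variance(1.0, rho, 10):.3f}")
```

Under these assumptions, adding learners helps only through the second term, so the achievable variance reduction is bounded below by rho * sigma^2. This is one way to read the motivation for building the ensemble from features that are orthogonal or nearly uncorrelated.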